Ethical AI in Cybersecurity: GDPR Compliance Guide

Artificial intelligence is a powerful tool in modern cybersecurity, but it must comply with GDPR to protect user privacy and avoid costly penalties. Here’s what you need to know:

- GDPR applies to all AI-driven data processing, including monitoring, behavior analysis, and automated threat responses.

- Non-compliance risks fines up to $21.7 million or 4% of global revenue.

- Key GDPR principles include:

- Transparency: Users must know when AI is involved and understand its decisions.

- Data minimization: Only collect and process data necessary for specific tasks.

- Human oversight: Automated decisions require human review for fairness and accountability.

- Tools like SHAP and LIME can explain AI decisions, while technologies like federated learning and differential privacy protect data during processing.

- Conducting Data Protection Impact Assessments (DPIAs) is mandatory for high-risk AI systems.

The article outlines actionable steps to ensure compliance, from embedding privacy into AI design to securing systems against cyber threats. Prioritizing GDPR compliance not only avoids fines but also builds trust with users.

10 Key Challenges for AI within the EU Data Protection Framework

sbb-itb-804866a

GDPR Principles for AI Cybersecurity Tools

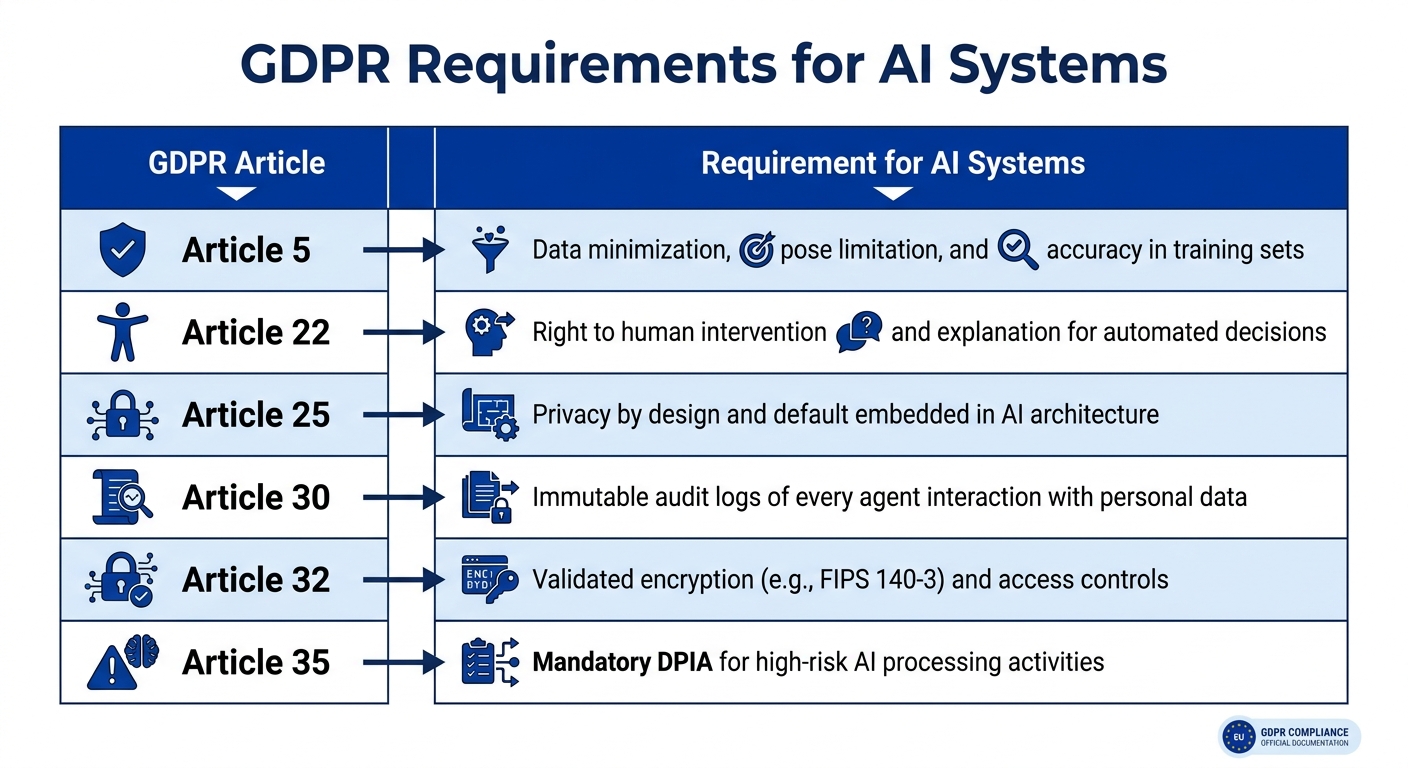

GDPR Articles and AI System Requirements for Compliance

When it comes to AI in cybersecurity, adhering to GDPR principles is essential. These guidelines not only help protect sensitive data but also ensure user trust. Every step of AI processing - whether analyzing network traffic, detecting threats, or automating responses - must align with these rules.

Lawfulness, Fairness, and Transparency

AI systems need a valid legal basis for processing data. This could be user consent, fulfilling a contract, or legitimate interest. In cybersecurity, legitimate interest is a common justification, but it must be documented through a Legitimate Interest Assessment to confirm its necessity and proportionality.

Transparency is another key principle. Users should always know when they’re interacting with an AI system instead of a human, and this disclosure must happen before the interaction starts. If the AI makes automated decisions - like flagging an account or blocking access - it’s crucial to provide clear, understandable information about how that decision was made. This includes detailing the data categories and the factors influencing the decision.

Fairness means avoiding discriminatory outcomes in AI algorithms. For example, in early 2026, OpenAI was fined around $16.3 million (€15 million) by the Italian Garante for transparency and legal basis violations. Similarly, the Dutch Data Protection Authority fined Clearview AI approximately $33.2 million (€30.5 million) for similar issues. To prevent such problems, regular bias audits using tools like Aequitas, AI Fairness 360, or Fairlearn can help ensure algorithms treat all user groups fairly.

For decisions with major impacts - such as denying access or flagging users - Article 22 of GDPR requires human oversight and gives users the right to challenge automated decisions. Explainable AI (XAI) tools like SHAP or LIME can help make these decisions understandable by generating human-readable explanations.

Next, let’s look at the principles of limiting data collection to what’s absolutely necessary.

Data Minimization and Purpose Limitation

Under GDPR, AI systems should only process the data needed for a specific purpose. For instance, an AI tool analyzing login patterns shouldn’t have access to users’ full transaction histories or behavioral profiles. Attribute-Based Access Control can help restrict AI actions to only what’s authorized.

The principle of purpose limitation ensures that data collected for one task isn’t reused for another without proper legal basis or fresh consent. A study found that 38% of companies violated this rule by repurposing chatbot data for advertising. For example, if network logs are gathered for threat detection, they can’t later be used to train a marketing algorithm without explicit user consent.

To minimize data usage, technical teams can employ feature selection methods to identify the smallest dataset necessary for effective AI performance. Instead of exact birth dates, age ranges can be used. Similarly, anonymized IP addresses can replace precise ones. Techniques like pseudonymization (e.g., using HMAC/SHA256 for user IDs) further reduce privacy risks.

Automated retention policies are critical for managing data. Files and logs should be deleted as soon as they’re no longer needed. Retention periods should be clearly defined, such as keeping anonymous logs for 90 days and deleting sensitive data immediately.

These practices pave the way for maintaining data integrity and confidentiality.

Integrity and Confidentiality

Articles 5(1)(f) and 32 of GDPR mandate strong measures to protect data from breaches and unauthorized access. This includes using FIPS 140-3 validated encryption for both stored and transmitted data, along with strict access controls. Tamper-evident audit logs should track every interaction with data, recording details like agent identity, purpose, timestamp, and accessed data.

The stakes are high: 71% of consumers say they wouldn’t do business with a company after a data breach. GDPR penalties for severe violations can reach $21.7 million (€20 million) or 4% of global annual revenue. Regularly scanning AI knowledge bases with automated tools can help detect and address any exposed personal data.

Privacy-enhancing technologies like federated learning, differential privacy, and homomorphic encryption allow AI models to be trained without exposing raw personal data.

| GDPR Article | Requirement for AI Systems |

|---|---|

| Article 5 | Data minimization, purpose limitation, and accuracy in training sets |

| Article 22 | Right to human intervention and explanation for automated decisions |

| Article 25 | Privacy by design and default embedded in AI architecture |

| Article 30 | Immutable audit logs of every agent interaction with personal data |

| Article 32 | Validated encryption (e.g., FIPS 140-3) and access controls |

| Article 35 | Mandatory DPIA for high-risk AI processing activities |

Ethical AI Practices and Governance

Creating an ethical AI framework goes beyond meeting regulatory requirements - it's about building systems that people can genuinely trust. To achieve this, organizations need clear principles, structured risk assessments, and solid human oversight to ensure their AI-powered cybersecurity tools act responsibly.

Defining Ethical AI Principles

An ethical AI framework should rest on seven key pillars: human agency and oversight, technical robustness and safety, privacy and data governance, transparency and explainability, diversity and fairness, societal and environmental well-being, and accountability. These principles should be embedded into the system's design from the outset, a practice often referred to as ethics by design.

To ensure ethical practices, organizations should implement review processes and maintain detailed "decision logs" documenting trade-offs made during system development. For instance, if a system must prioritize between quicker threat detection and stronger privacy safeguards, the decision and its reasoning should be recorded.

Ethical considerations must also align with established legal standards like GDPR. This isn't just theoretical - real-world consequences exist. For example, the Italian Data Protection Authority fined Clearview AI €20 million (around $21 million) for scraping public images without proper legal consent.

Conducting AI Risk Assessments

Building on ethical principles, organizations need to assess risks systematically. These risk assessments should be ongoing, adapting as AI systems and cybersecurity threats evolve.

A six-step lifecycle can guide this process: inventory and classification, risk identification, risk analysis (scoring), risk evaluation (prioritization), risk treatment (mitigation), and continuous monitoring.

Start by classifying your AI system according to the EU AI Act, which applies to high-risk systems beginning August 2, 2026. Systems involved in profiling, large-scale automated decision-making, or critical cybersecurity functions often fall under the "High Risk" category, requiring a comprehensive compliance framework. Additionally, GDPR Article 35 mandates a Data Protection Impact Assessment (DPIA) for such systems.

To quantify risks, use a 5x5 Likelihood vs. Impact matrix. Scores in the 20–25 range (Critical) should halt deployment until mitigated. Risk assessments should address technical, ethical, legal, operational, and reputational factors. Data shows that organizations with mature AI risk practices experience 60% fewer AI-related failures, and by 2025, 78% of enterprise buyers will demand documented AI risk assessments from vendors.

Responsibility for these practices lies with senior management and Data Protection Officers (DPOs), who need to grasp how AI's complexities impact data protection. Non-compliance with prohibited AI practices can lead to fines of up to €35 million (around $38 million) or 7% of global annual revenue.

Ensuring Human Oversight and Explainability

Effective human oversight is critical to managing risks identified through structured evaluations. This step complements GDPR principles and the risk assessment process, reinforcing the balance between compliance and strong cybersecurity.

Human oversight must be genuine. A "human in the loop" who merely rubber-stamps AI recommendations without real authority does not meet GDPR Article 22 standards. Establish clear workflows for escalating borderline AI decisions to manual review. For decisions with significant legal or personal impacts - like blocking user access or initiating account investigations - individuals have the right to request human intervention, express their views, and challenge the decision.

Organizations must also provide "meaningful information about the logic involved" in AI decisions. This includes detailing the types of data used, their relevance, and the main factors influencing the outcome. Tools like SHAP or LIME can help explain which features drive specific outputs.

Finally, maintain tamper-evident audit trails that link each AI interaction involving personal data to the responsible human authorizer. This documentation not only ensures accountability but also helps mitigate risks like automation bias and the loss of human expertise.

Data Protection by Design and DPIAs for AI Systems

To avoid expensive retrofits and compliance issues, organizations should incorporate GDPR requirements into AI systems from the very beginning. This means embedding privacy protections into the design phase and systematically assessing risks before processing any data.

Privacy by Design and Default

Article 25 of the GDPR mandates Privacy by Design (PbD), requiring organizations to integrate data protection measures into their AI systems from the outset rather than adding them later. According to the UK Information Commissioner’s Office, data protection should be "baked into" both processing activities and business practices throughout the system's lifecycle.

The PbD framework is built on seven core principles: proactive measures, default privacy settings, embedded design, maintaining full functionality, end-to-end security, transparency, and respect for user privacy. For instance, in March 2023, a SaaS company limited trial data collection to just email addresses and company names, while also automatically deleting inactive trial data after 30 days. This approach ensured full GDPR compliance and significantly reduced the risk of data breaches.

The stakes for non-compliance are steep. By January 2025, cumulative GDPR fines had reached an estimated $6.4 billion, underscoring the seriousness with which regulators enforce these rules. Organizations that fail to document and implement a Privacy by Design strategy risk facing regulatory action.

To align with these requirements, design your AI systems to collect only the data that is strictly necessary, encrypt data by default, and implement automatic deletion policies. As Danielle Barbour from Kiteworks points out, "A system prompt instructing a model to handle data carefully does not constitute privacy by design".

Building privacy into the system from the start sets the foundation for a robust Data Protection Impact Assessment (DPIA), which is the next critical step.

Executing DPIAs for AI Cybersecurity Tools

Once privacy measures are embedded, conducting a formal DPIA ensures ongoing compliance and helps mitigate risks. GDPR Article 35 requires a DPIA for processing activities that are likely to pose high risks to individuals, and AI systems almost always meet this threshold due to their reliance on advanced technologies, profiling, and large-scale data processing.

Unlike a standard Privacy Impact Assessment, which focuses on organizational risks, a DPIA is designed to identify and address risks to individuals’ rights and freedoms. For AI-driven cybersecurity tools, this includes tackling issues like algorithmic bias in threat detection, the lack of explainability in "black box" models, challenges with data minimization, and the potential for inferring sensitive information from seemingly non-sensitive inputs.

To determine whether a DPIA is necessary, start with a screening checklist. If your AI system meets two or more of the nine European Data Protection Board (EDPB) criteria - such as profiling combined with innovative technology - you must proceed with a full DPIA. The Data Controller is responsible for conducting the DPIA, often with input from the Data Protection Officer. According to an IAPP survey, 73% of organizations conducting DPIAs identified at least one major risk that required mitigation.

The DPIA process typically involves seven steps:

- Screening to determine if a DPIA is required.

- Mapping out data flows and the system’s architecture to describe processing activities.

- Evaluating the necessity of processing through proportionality checks.

- Identifying risks to individuals, such as bias, discrimination, or lack of transparency.

- Defining mitigations, including technical measures like encryption, explainable AI, and human oversight.

- Securing sign-off from the Data Protection Officer and senior management.

- Establishing regular review cycles to keep the DPIA updated.

DPIAs should be reviewed and updated every three years or whenever significant changes occur.

Failing to complete a mandatory DPIA can result in fines of up to $10.9 million or 2% of the organization’s global annual turnover, whichever is greater. As Datasumi aptly notes, "The DPIA emerges not merely as a compliance exercise, but as the primary legal and ethical mechanism for identifying, comprehending, and mitigating the heightened risks posed by AI".

Securing AI Systems Against Cyber Threats

Building privacy into AI systems is just one part of the equation. The other, equally critical, aspect is protecting these systems - and the sensitive data they handle - against cyber threats. Once privacy by design is established, the focus shifts to implementing targeted cybersecurity measures that guard AI systems and their data from evolving risks.

Cybersecurity Measures for AI Systems

To safeguard AI systems effectively, start with strong encryption standards. For stored data, use FIPS 140-3 Level 1 validated encryption, and for data in transit, implement TLS 1.3 - especially when handling high-risk personal information. Beyond basic folder-level permissions, adopt Attribute-Based Access Control (ABAC) at the operational level to ensure AI agents only access the precise data required for their tasks. These measures not only strengthen defenses but also align with GDPR Article 32 requirements.

Another key measure is network isolation. Deploy AI systems within a private Virtual Private Cloud (VPC) that lacks public internet access, minimizing exposure to external threats.

Regularly test for vulnerabilities through quarterly adversarial testing. Simulate attacks like prompt injection (where malicious commands bypass safety measures), model inversion (which infers training data from outputs), and data leakage between users. Given the complexity of popular machine learning frameworks - some with over 130 external dependencies and nearly 887,000 lines of code - monitor third-party and open-source libraries for potential risks.

Here’s a breakdown of common attack types and strategies to mitigate them:

| Attack Type | Description | Mitigation Strategy |

|---|---|---|

| Prompt Injection | Embedding harmful instructions in user queries to bypass safety filters | Use robust prompts, input validation, and adversarial testing |

| Model Inversion | Inferring training data from model outputs | Apply differential privacy and reduce confidence score granularity |

| Membership Inference | Identifying if specific data was used in training | Prevent overfitting and use synthetic datasets |

| Model Poisoning | Injecting false or biased data into training sets | Validate new training data with human oversight |

Once system defenses are in place, it’s time to focus on securing the data these systems process.

Protecting Data in AI Processing

Filtering inputs and outputs is a must to prevent accidental data leaks. Tools like Microsoft Presidio or AWS Macie can automatically redact sensitive information from AI responses. Input validation is equally important - patterns like "ignore previous instructions" should raise red flags and trigger alerts. For testing and development, rely on synthetic data that mimics production datasets without containing actual personal information.

Privacy-enhancing technologies are another essential layer of defense. Differential privacy, for instance, introduces mathematical noise to training data, making it impossible to trace individual data points back to the model. As Dr. Aaron Roth from the University of Pennsylvania explains:

"Differential privacy represents a paradigm shift in how we think about data protection in AI. It's not about access controls or encryption - it's about fundamentally limiting what can be learned about individuals from the model itself."

Federated learning adds another layer of protection by training models across multiple locations without transferring raw personal data. This minimizes risks tied to international data transfers. For highly sensitive workloads, consider confidential computing with hardware-protected enclaves like Intel SGX or AMD SEV, which process data in isolated environments.

To further reduce vulnerabilities, avoid overfitting during model training. Overfitting increases the risk of attacks like membership inference and model inversion. For example, researchers have shown that facial images from training datasets can be reconstructed with 95% accuracy using model inversion techniques on facial recognition systems. Additional safeguards include implementing rate limits on API queries to block data extraction attempts and automating the deletion of temporary files and logs within 30 to 90 days to comply with storage limitations.

Using GDPR-Compliant Tools Like Eleidon

Once you've put strong cybersecurity measures and privacy-focused technologies in place, the next step is choosing a tool that aligns with GDPR regulations. This is where platforms like Eleidon come in. Designed with cryptographic security at its core, Eleidon not only simplifies GDPR compliance but also strengthens your overall security framework.

Eleidon Features for GDPR Compliance

Eleidon addresses key GDPR requirements with its cryptographic messaging system, which uses Ed25519 digital signatures and a public key registry to establish trust and prevent spoofing. Every message is end-to-end encrypted and cryptographically verified, meeting GDPR Article 32's standards for data integrity and confidentiality.

What sets Eleidon apart is its privacy-first design. Private keys are generated and stored on the client side, never transmitted to the Eleidon API. This ensures that your organization retains full control over cryptographic keys, eliminating the risk of third-party access.

The platform also provides cryptographic audit trails, offering a permanent, verifiable record of message authenticity. These trails help meet GDPR's accountability requirements and make it easier to demonstrate compliance during audits. Additionally, Eleidon's system allows organizations to verify emails before any AI processes them, reducing the risk of unauthorized or fraudulent data handling.

Eleidon introduces a Confidence Score system for message verification, offering more detailed insights than traditional pass/fail protocols. Verified messages from agents with verified inboxes score 1.0, valid signatures from unverified inboxes score 0.7, and fraudulent or unknown senders score 0.0. This detailed scoring system empowers compliance teams to make smarter decisions about data processing.

These features are available across several plans, catering to various organizational needs.

Eleidon Plans Comparison for GDPR Compliance

Eleidon offers flexible plans to suit different compliance requirements and organizational scales:

- Personal plan ($0): Ideal for individuals, this plan includes unlimited encrypted messages, end-to-end encryption, and platform access for GDPR-compliant workflows.

- Team plan ($9/user/month): Designed for businesses, it adds custom domain support, centralized team management, and priority support for enhanced control.

- Team + Vault plan ($14/user/month): Includes everything in the Team plan plus encrypted key backup, multi-device sync, and an account recovery guarantee - essential for maintaining cryptographic security when employees leave or devices are lost.

For organizations with high-volume verification needs, Eleidon’s API pricing starts with a free tier (100 verifications/month) and scales to the Platform plan ($89/month for 100,000 verifications/month). This plan includes SLA guarantees and dedicated support, making it a strong option for businesses managing large volumes of AI-generated communications. When choosing a plan, organizations should carefully assess their verification needs to ensure they have the capacity to monitor compliance effectively.

Conclusion

This guide highlights how adopting privacy-by-design principles and maintaining strong oversight not only ensures GDPR compliance but also strengthens customer trust. By focusing on GDPR-compliant AI cybersecurity, organizations can differentiate themselves in a crowded market while addressing persistent vulnerabilities in AI systems. Prioritizing compliance isn't just about avoiding penalties - it offers a distinct competitive edge.

"GDPR isn't just about compliance; it's about building trust. Organizations that embrace privacy-by-design principles in their AI systems gain competitive advantages".

Ignoring compliance can lead to hefty fines and a loss of customer confidence. Consumer behavior makes this clear: data breaches and poor transparency can harm both revenue and customer loyalty.

To move forward, organizations must embed data protection into every stage of their operations - from the initial design phase to everyday practices. This includes conducting Data Protection Impact Assessments for high-risk AI systems, ensuring human oversight of automated decisions, and maintaining tamper-evident audit trails to document how personal data is accessed and processed by AI tools.

As a data controller, your role goes beyond obtaining certifications like SOC 2 or ISO 27001. While these are important, they must be paired with operational compliance. This involves implementing documented processes, enforcing strict access controls, and respecting data subject rights such as the right to erasure or obtaining explanations for decisions.

The intersection of GDPR and the EU AI Act brings new compliance challenges but also unique opportunities. Companies that treat privacy and ethics as central to their strategy - rather than as regulatory burdens - are seeing tangible benefits. For instance, they report 89% fewer regulatory inquiries and can command an 18% premium in market pricing. Leveraging tools designed with advanced cryptographic security and GDPR compliance, like Eleidon, ensures not only the protection of user data but also the resilience of your business amid evolving regulations.

FAQs

When does GDPR Article 22 apply to AI security decisions?

GDPR Article 22 comes into play when automated processes result in decisions that have legal or similarly meaningful impacts on individuals. It grants people the right to opt out of decisions made purely by automated systems, unless specific conditions are met. These conditions include situations where such automation is necessary, legally justified, or based on explicit consent from the individual. The goal is to promote fairness and transparency in how AI-driven decisions are made.

What should a DPIA include for an AI cybersecurity tool?

A Data Protection Impact Assessment (DPIA) for an AI-powered cybersecurity tool should focus on identifying privacy risks, evaluating whether the data processing is justified and proportionate, and examining how it might affect individuals' rights. It should also include a detailed plan for addressing high-risk situations and ensuring adherence to privacy laws, particularly in cases where the processing could significantly impact data subjects.

How can we prevent an AI model from leaking personal data?

To keep AI models from exposing personal data, organizations should take key precautions such as limiting data collection to only what's necessary, implementing a privacy-first design approach, and conducting regular risk evaluations. These actions reduce the chances of security breaches and privacy violations while aligning with GDPR requirements. Protecting sensitive data and adhering to regulations should always be a top priority.